Authors: Sihan Meng, Leyu Zhu, Pengcheng Shi

Affiliation: RSBM

Email: pengchengshi@biotechrs.com; pcspc9@gmail.com

Abstract

ODF manufacturing lines often rely on vendor remote support for commissioning and troubleshooting. At bandwidth-constrained sites with immature maintenance capabilities, remote debugging prolongs downtime and raises scrap, while skills gaps limit first-time fixes. We quantify the delay drivers, map competency gaps, and propose a hybrid optimisation program that pairs targeted skills uplift with connectivity upgrades, standardised data logging (PAT/alarms), local triage SOPs, a spare-parts strategy, and vendor on-call SLAs. In an illustrative deployment, mean downtime per incident fell from 52 h to 22 h, first-hour scrap decreased by ~25%, and on-time release improved by ~8 percentage points. Figures show downtime composition, a skills-gap heatmap, and a 12-week optimisation roadmap [1–7].

Introduction

Remote vendor support is indispensable for complex ODF assets (slot-die, multi-zone dryers, web handling, PLC/HMI, in-line vision/PAT). Yet remote-only models suffer from scheduling latency, poor data capture, and limited authority for on-site adjustments. When local teams lack practical skills, repeated remote cycles extend outages and defer root-cause removal. A hybrid strategy that elevates local capability and streamlines remote collaboration can restore availability and GMP readiness [1–4].

Methods

Current-state assessment: incident logs, alarm histories, and release delays over 6 months; value-stream mapping of a typical “call for help” event.

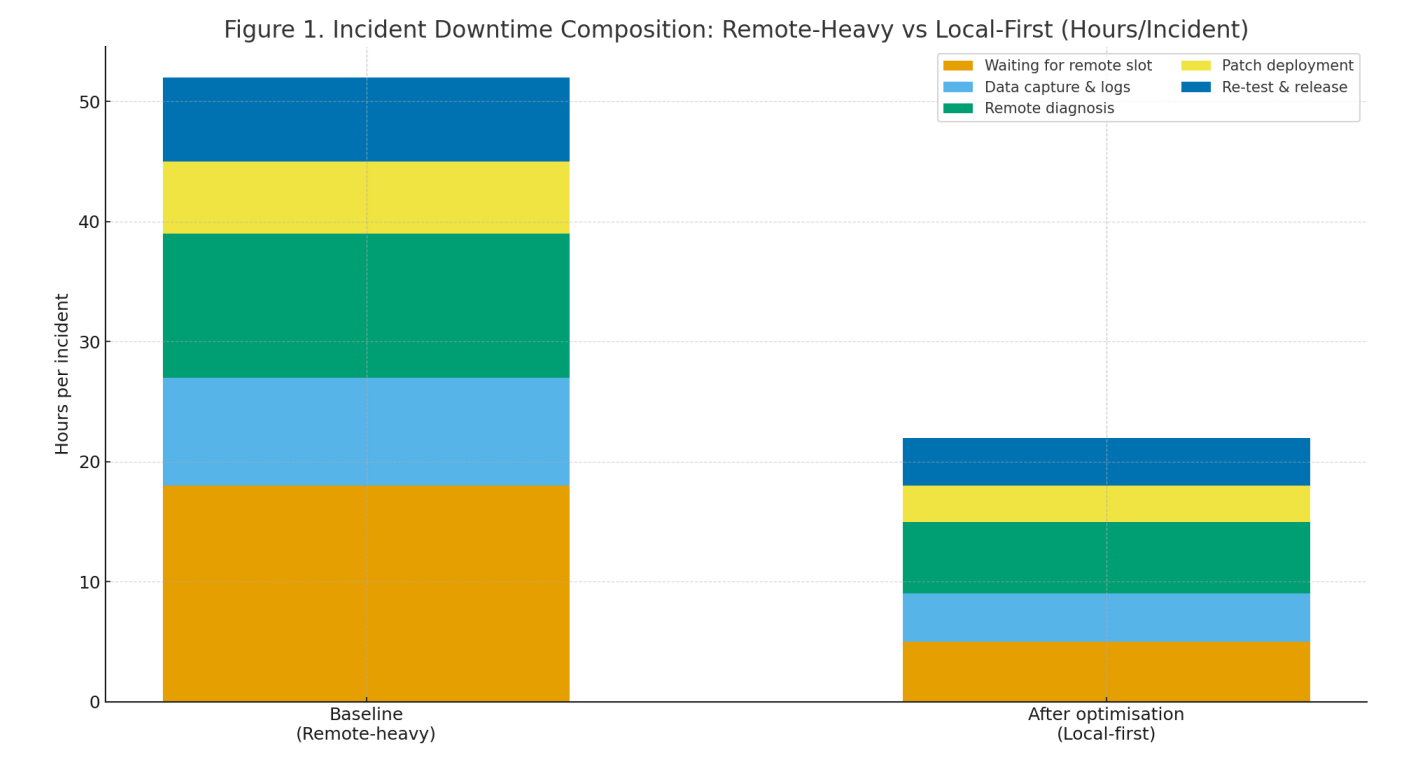

Delay decomposition: time buckets—waiting for remote slot, data capture, remote diagnosis, patch deployment, re-test—benchmarked pre/post (Figure 1).
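The decomposition can be sketched in a few lines of Python. This is a minimal illustration, not the authors' tooling: the bucket names mirror the list above, while the function names and the hour values in `example_pre` and `example_post` are hypothetical placeholders and do not reproduce the data behind Figure 1.

```python
from typing import Dict

# Bucket names follow the Methods text; identifiers are our own choice.
BUCKETS = (
    "waiting_for_remote_slot",
    "data_capture",
    "remote_diagnosis",
    "patch_deployment",
    "re_test",
)

def bucket_shares(hours: Dict[str, float]) -> Dict[str, float]:
    """Return each bucket's share of total incident hours as a fraction."""
    total = sum(hours[b] for b in BUCKETS)
    if total <= 0:
        raise ValueError("total incident hours must be positive")
    return {b: hours[b] / total for b in BUCKETS}

def bucket_reduction(pre: Dict[str, float], post: Dict[str, float]) -> Dict[str, float]:
    """Return the relative reduction per bucket (0.5 means 50% fewer hours)."""
    return {b: (pre[b] - post[b]) / pre[b] for b in BUCKETS if pre[b] > 0}

# Hypothetical placeholder hours for one incident, pre and post optimisation.
example_pre = {
    "waiting_for_remote_slot": 10.0,
    "data_capture": 4.0,
    "remote_diagnosis": 6.0,
    "patch_deployment": 3.0,
    "re_test": 2.0,
}
example_post = {
    "waiting_for_remote_slot": 5.0,
    "data_capture": 2.0,
    "remote_diagnosis": 3.0,
    "patch_deployment": 3.0,
    "re_test": 2.0,
}

for name, share in bucket_shares(example_pre).items():
    print(f"{name}: {share:.0%} of baseline hours")
for name, cut in bucket_reduction(example_pre, example_post).items():
    print(f"{name}: reduced by {cut:.0%}")
```

Keeping the bucket list fixed makes pre/post comparisons like-for-like; an incident log that lacks one of the buckets fails with a `KeyError` instead of silently skewing the shares.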

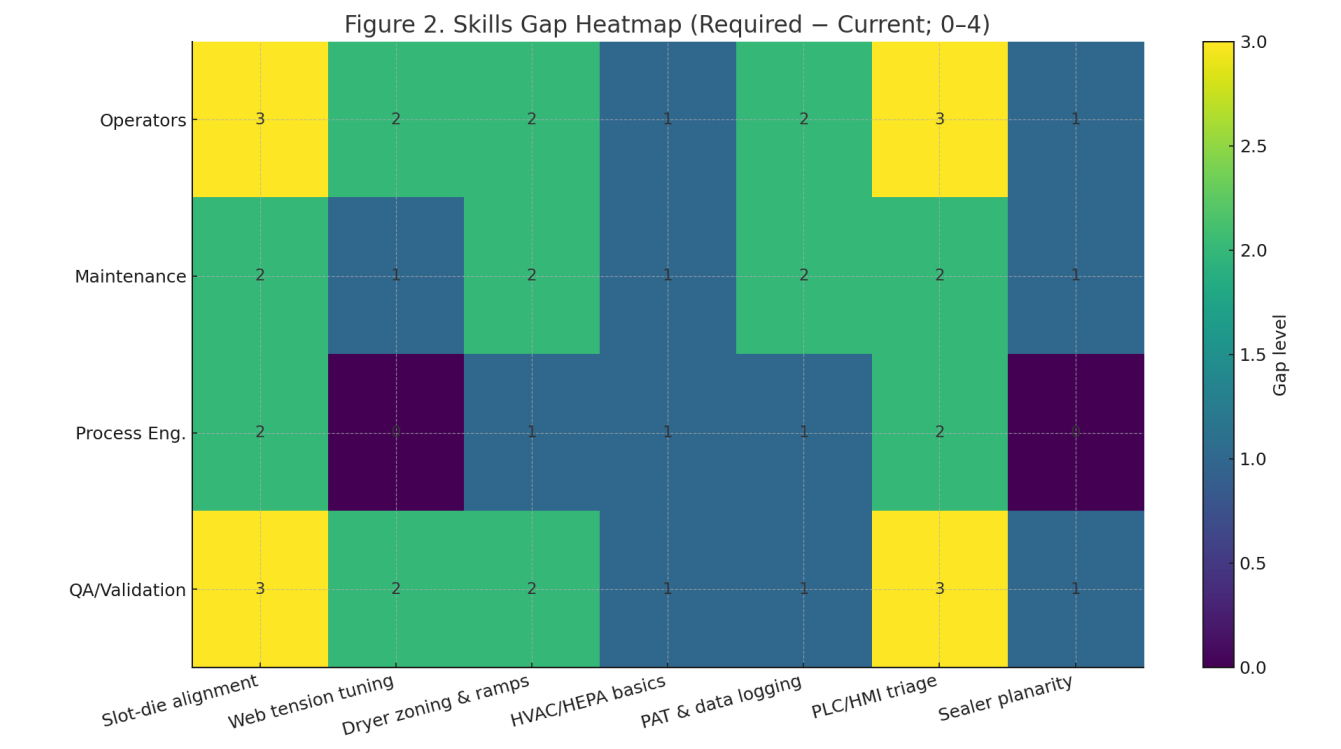

Competency mapping: required proficiency (1–5) vs current for Operators, Maintenance, Process Engineering, QA/Validation across seven competencies; compute gap = required − current (Figure 2).
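The gap calculation is simple enough to show directly. The sketch below assumes proficiency scores are stored per role and per competency. Only three of the seven competencies are named in this paper, so only those appear here, and every score is a made-up placeholder, not a value from Figure 2.

```python
from typing import Dict, List, Tuple

Scores = Dict[str, Dict[str, int]]  # role -> competency -> proficiency (1-5)

def skills_gap(required: Scores, current: Scores) -> Scores:
    """Compute gap = required - current for every role/competency pair."""
    gaps: Scores = {}
    for role, needs in required.items():
        gaps[role] = {}
        for competency, req in needs.items():
            cur = current[role][competency]
            for score in (req, cur):
                if not 1 <= score <= 5:
                    raise ValueError(f"proficiency must be 1-5, got {score}")
            gaps[role][competency] = req - cur
    return gaps

def largest_gaps(gaps: Scores, n: int = 3) -> List[Tuple[str, str, int]]:
    """Return the n largest gaps as (role, competency, gap) tuples."""
    flat = [(role, comp, gap) for role, row in gaps.items() for comp, gap in row.items()]
    return sorted(flat, key=lambda item: item[2], reverse=True)[:n]

# Hypothetical placeholder scores for two of the four roles.
required = {
    "Operators": {"slot_die_alignment": 4, "plc_hmi_triage": 3, "pat_data_logging": 4},
    "Maintenance": {"slot_die_alignment": 5, "plc_hmi_triage": 4, "pat_data_logging": 3},
}
current = {
    "Operators": {"slot_die_alignment": 2, "plc_hmi_triage": 2, "pat_data_logging": 3},
    "Maintenance": {"slot_die_alignment": 3, "plc_hmi_triage": 2, "pat_data_logging": 3},
}

gaps = skills_gap(required, current)
top = largest_gaps(gaps)
print(top)
```

The gap is left unclipped, so a negative value would indicate a role that exceeds the required level; whether to floor such values at zero before plotting a heatmap is a site-level choice.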

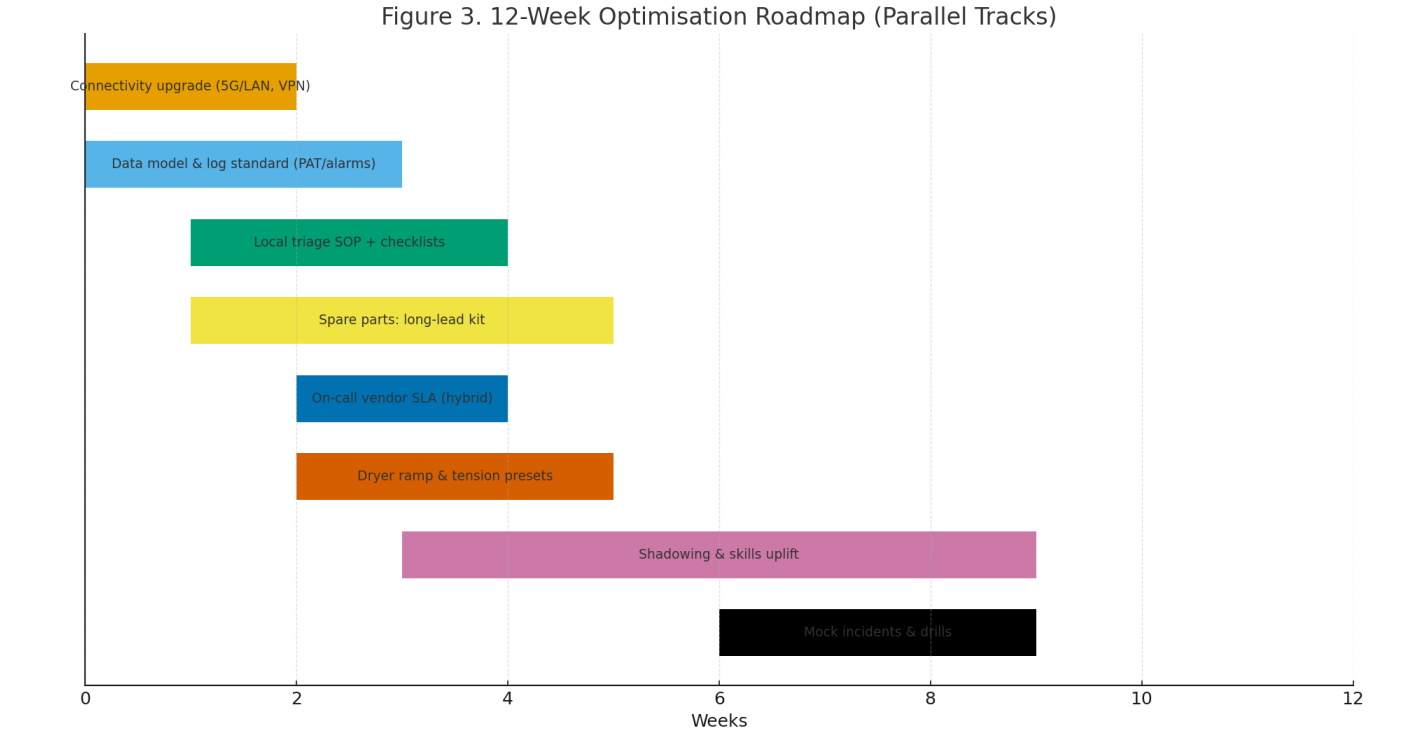

Optimisation bundle: connectivity (5G/LAN + secure VPN), PAT/log standards, local triage SOPs, long-lead spares kit, hybrid SLA (remote + escalation to on-site within 48–72 h), dryer-ramp/tension presets, shadowing & drills (Figure 3).

Measures: MTBF/MTTR, hours/incident, first-hour scrap, deviation density, % on-time disposition, PM compliance, CAPA closure.

Measures

Reliability: MTTR (h), MTBF (h), incidents/1,000 h.
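A minimal sketch of how the reliability measures could be derived from an incident log follows. The paper does not spell out its formulas, so the common definitions are assumed: MTTR as mean downtime per incident, MTBF as operating hours divided by the number of incidents, and the incident rate normalised to 1,000 operating hours. The names `Reliability` and `reliability` and the sample values are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence

@dataclass
class Reliability:
    mttr_h: float               # mean time to repair, hours
    mtbf_h: float               # mean time between failures, hours
    incidents_per_1000h: float  # incident rate per 1,000 operating hours

def reliability(downtimes_h: Sequence[float], operating_hours: float) -> Reliability:
    """Derive MTTR, MTBF and incident rate from per-incident downtimes.

    Assumes operating_hours is the uptime accumulated over the same
    period in which the incidents were logged.
    """
    n = len(downtimes_h)
    if n == 0:
        raise ValueError("at least one incident is required")
    if operating_hours <= 0:
        raise ValueError("operating_hours must be positive")
    return Reliability(
        mttr_h=sum(downtimes_h) / n,
        mtbf_h=operating_hours / n,
        incidents_per_1000h=1000.0 * n / operating_hours,
    )

# Hypothetical placeholder log: three incidents over 1,500 operating hours.
result = reliability([10.0, 20.0, 30.0], operating_hours=1500.0)
print(result)
```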

Quality & throughput: first-hour scrap (%), thickness Cpk, release lead time (d).

Process governance: PAT alarm rate per shift, log completeness, % triaged locally.

People & readiness: skills gap index, certification completion, drill pass rate, SLA adherence.

Results

Downtime composition (Fig. 1). Waiting for a remote slot and remote diagnosis together accounted for ~58% of baseline hours per incident. With connectivity upgrades, standard logs, and local triage, these two buckets shrank by ~72% and ~50%, respectively; overall hours per incident dropped from 52 h to 22 h (illustrative).
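As a quick check on the headline figures (simple arithmetic on the illustrative values quoted above, not additional data), the overall reduction in hours per incident and the baseline size of the two dominant buckets work out as:

$$
\frac{52\ \text{h} - 22\ \text{h}}{52\ \text{h}} = \frac{30}{52} \approx 0.58,
\qquad
0.58 \times 52\ \text{h} \approx 30\ \text{h}.
$$

The two results look alike only by coincidence: the first is the overall saving (about 58% of baseline hours), the second is the roughly 30 h that waiting and remote diagnosis occupied before the program.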

Skills gaps (Fig. 2). Largest gaps appeared in slot-die alignment, PLC/HMI triage, and PAT/data logging for Operators and Maintenance; QA needed uplift around slot-die/planarity to verify fixes.

Operational lift. First-hour scrap decreased by ~25%; on-time disposition improved by ~8 pp; recurrence of identical deviations fell by ~40% after dryer-ramp/tension presets and checklists were introduced.

Program cadence (Fig. 3). A 12-week roadmap with overlapping tracks and shadowing/drills institutionalised the gains; the hybrid vendor SLA ensured on-site escalation within 48–72 h.

Discussion

Why remote-only fails. Vendor calendars and back-and-forth log requests prolong outages; limited on-site authority prevents immediate corrective action.

Why hybrid works. Standardised telemetry (PAT tags, alarm codes) plus curated checklists enable local first-time triage; VPN/low-latency links shorten remote cycles; long-lead spares avoid waiting waste; shadowing converts “remote instructions” into tacit skill.

Change management. QA/Validation must pre-approve local adjustments (bounded by SOP) and ensure data integrity (ALCOA+) for remote sessions.

Economic view. Connectivity and training are modest capex/opex relative to avoided scrap and lost OEE; typical payback <6 months (scenario).
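The payback claim can be tested against a site's own numbers with a simple undiscounted calculation. The sketch below is an assumption about how such a scenario might be computed, not the authors' model; `payback_months` and both input figures are hypothetical placeholders.

```python
import math

def payback_months(investment: float, monthly_savings: float) -> float:
    """Undiscounted payback period: investment divided by net monthly savings.

    Returns math.inf when savings are zero or negative, i.e. the
    investment never pays back.
    """
    if investment < 0:
        raise ValueError("investment must be non-negative")
    if monthly_savings <= 0:
        return math.inf
    return investment / monthly_savings

# Hypothetical placeholders: one-off connectivity and training spend versus
# net monthly savings from avoided scrap and recovered line availability.
months = payback_months(investment=60_000.0, monthly_savings=15_000.0)
print(f"payback: {months:.1f} months")
```

Net monthly savings should already subtract recurring costs such as link fees and SLA retainers; otherwise the payback period is understated.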

Limitations. Results are illustrative and depend on polymer/solvent system, climate control maturity, and vendor responsiveness; sites should tailor the skills matrix and SLA.

Conclusion

Time-consuming remote debugging combined with low local capability is a primary driver of excessive ODF downtime. A hybrid optimisation—connectivity + standard logs, local triage SOPs, skills uplift with drills, long-lead spares, and a vendor SLA with on-site escalation—cuts incident hours, stabilises quality, and accelerates release while staying audit-ready.

References

[1] ICH Q10: Pharmaceutical Quality System—roles, responsibilities, and knowledge management.

[2] ISPE/GAMP 5: data integrity, remote access, and computerized systems validation.

[3] EU-GMP Annex 15: qualification/validation and change control expectations.

[4] Maintenance & reliability standards (SMRP/ISO 55000) on MTTR/MTBF and critical spares.

[5] USP/Ph. Eur. chapters for ODF quality and in-process monitoring.

[6] ASTM F1249/F1927 for barrier-linked dryer/HVAC impacts on quality.

[7] Best-practice guides for remote support in regulated environments.